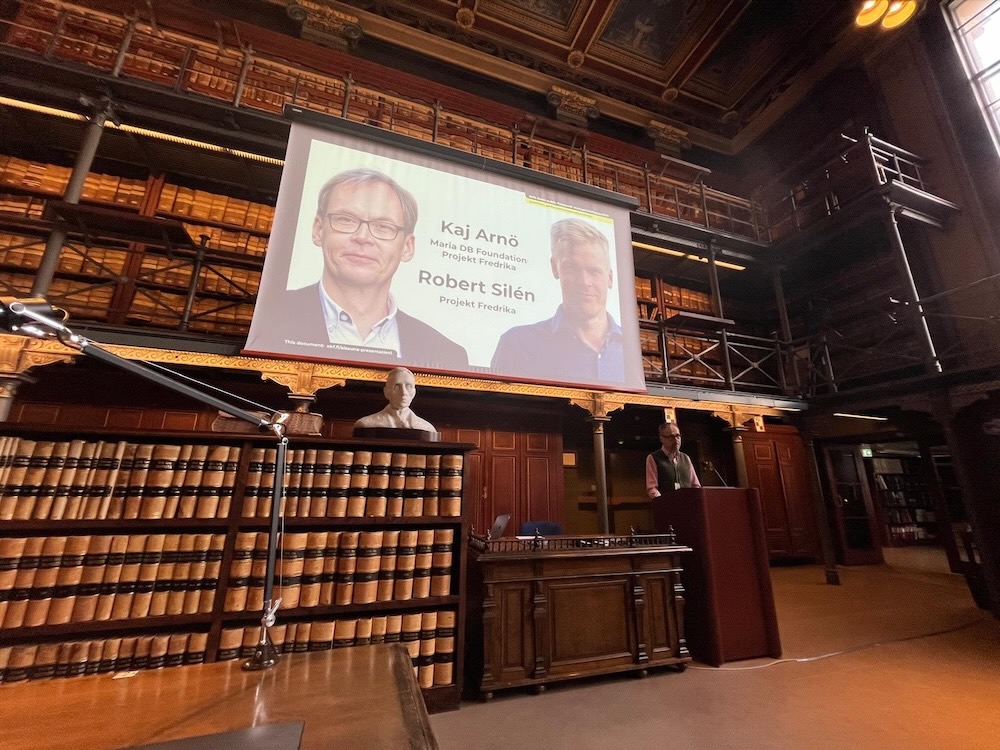

On Monday 6 May 2024, the Finnish open culture organisation AvoinGLAM, together with the Wikimedia Foundation, organised an event called AI Sauna at the National Archives of Finland (Swedish: Riksarkivet; Finnish: Kansallisarkisto) in Helsinki. Projekt Fredrika had the pleasure of being invited to speak about our Gen AI experiences; the talk was recorded and uploaded to YouTube.

A classy venue

The venue was in central Helsinki, right next to the venerable Bank of Finland and the even fancier House of the Estates (Swedish: Ständerhuset; Finnish: Säätytalo). As if the overall location weren’t fancy enough, the presentations were given in the reading room, which I remember visiting as a child when my parents studied genealogical records (archiving has been a big thing in Finland for centuries).

We wore two (or even three) hats

We came to the event wearing several hats. The main two were:

- MariaDB, which is the database that Wikipedia runs on – my daytime job

- Fredrika, which hosts this blog, and is a Wikipedia project focusing on the representation of Swedish Finland in Wikipedia, in Swedish and other languages – Robert’s daytime job and my pro bono job

We had four topics on Gen AI, or more specifically, Large Language Models (ChatGPT, Claude, Llama 2 and the like).

- First, I presented ten conclusions about how to use AI to improve the quality of Wikipedia as an encyclopaedia

- Second, I shared three overall goals of our current Wikipedia projects

- Third, I raised the ambition level to that of defending Bildung and the liberal Western world order, and shared our thoughts about the role of the database for AI

- Fourth, Robert shared the practical experiences of our AI work so far

Ten prime insights on how to use AI to improve Wikipedia

- use AI to improve the capacity of human wikipedians

- do this by using AI as an infinitely eager junior research assistant,

- but don’t let the junior research assistant update Wikipedia directly, or we will have a situation similar to Lsjbot in Swedish and Cebuano (bulk data of questionable quality)

- start by identifying great sources (high-quality books, articles) and then perform two types of analysis on them: NER (Named Entity Recognition), meaning identifying which people, places and other entities with Wikidata Q codes the source material is about, and RAG (Retrieval Augmented Generation), meaning letting a Large Language Model embed the source, that is, vectorise it into a numeric format that can be searched and compared

- then compare the vectorised source data with existing Wikipedia articles

- use human resources where they matter most, for example by prioritising Wikipedia articles by their number of reads

- use best practices of Generative AI, in the form of prompt engineering, which means tweaking the exact requests that are given to the large language model

- do all of this with existing Wikipedia guidelines as the main priority, in particular NPOV, encyclopaedic language style and high-quality source references

- in all this, never forget the appropriate use of conventional IT and manual Wikipedia updates; in our case, I’ve done Wikipedia edits in 40 languages, and Robert has done more than 50,000 Wikidata edits and 10,000 Wikipedia updates in Swedish alone

- if you follow these guidelines, and add a human subject-matter expert, you can increase human productivity by about 10x
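To make the "compare the vectorised source with existing articles" and "prioritise by number of reads" steps a bit more concrete, here is a minimal Python sketch. It is not our actual pipeline: the bag-of-words `embed` function is a toy stand-in for a real embedding model, the scoring rule in `rank_for_review` is a hypothetical choice, and all names and sample data are invented for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_for_review(source_text, articles):
    """Rank articles so that widely read, poorly covered ones come first.

    articles: iterable of (title, article_text, monthly_views) tuples.
    Returns a list of (title, coverage, priority), highest priority first.
    """
    source_vec = embed(source_text)
    ranked = []
    for title, text, views in articles:
        coverage = cosine(source_vec, embed(text))
        # Hypothetical scoring rule: many reads and low coverage rank highest.
        priority = views * (1.0 - coverage)
        ranked.append((title, coverage, priority))
    return sorted(ranked, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    # Placeholder data, invented purely for illustration.
    source = "placeholder passage about a writer and her novels"
    articles = [
        ("Article A", "placeholder passage about a writer and her novels", 900),
        ("Article B", "short stub about a writer", 500),
        ("Article C", "unrelated text on another topic", 100),
    ]
    for title, coverage, priority in rank_for_review(source, articles):
        print(f"{title}: coverage={coverage:.2f}, priority={priority:.1f}")
```

The output is only a worklist for a human wikipedian. Nothing here writes to Wikipedia, in line with the point about not letting the junior research assistant update articles directly.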

Our Wikipedia related goals

The goals of our projects are to improve Wikipedia contents and quality in three somewhat related ways:

- one, by increasing the amount of high quality information (fill in holes)

- two, by identifying and correcting low-quality content and NPOV violations (fix bugs)

- three, by spreading quality information between languages; this is a much more elaborate and difficult process than mere translation, because different language versions have different cultures, practices and habits

Higher ambitions: Remove propaganda from Wikipedia

Now, we think it’s time to raise the ambition level a lot. We think the learnings here have the potential to greatly improve the overall quality of Wikipedia and, in particular, its NPOV. In another Wikipedia project, Projekt Kateryna (projektkateryna.org), we are focusing on removing Russian propaganda from Wikipedia, and the most urgent topic here is of course how Ukraine is represented. But Russian propaganda isn’t limited to Ukraine, and propaganda isn’t limited to Russia.

The role of the database

To accomplish this, we need to scale out the work, and no longer work with Google Spreadsheets but with a proper database. And here our overall logic is that it makes sense for the source data, the AI vector data, and the output data all to be within the same database. For Wikipedia, that’s MariaDB Server.
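As a rough illustration of what "everything in the same database" can look like, here is a small Python sketch. It uses the standard library's `sqlite3` purely as a stand-in so the example runs anywhere; it does not use MariaDB or any MariaDB-specific vector features. The table and column names are hypothetical, and the embedding is simply stored as JSON text.

```python
import json
import sqlite3


def create_schema(conn):
    """Create source, vector and output tables side by side in one database."""
    conn.executescript(
        """
        CREATE TABLE source_passage (
            id INTEGER PRIMARY KEY,
            citation TEXT NOT NULL,
            body TEXT NOT NULL
        );
        CREATE TABLE passage_vector (
            passage_id INTEGER PRIMARY KEY REFERENCES source_passage(id),
            embedding TEXT NOT NULL  -- JSON-encoded list of floats
        );
        CREATE TABLE edit_suggestion (
            id INTEGER PRIMARY KEY,
            passage_id INTEGER NOT NULL REFERENCES source_passage(id),
            article_title TEXT NOT NULL,
            suggestion TEXT NOT NULL,
            reviewed INTEGER NOT NULL DEFAULT 0
        );
        """
    )


def add_passage(conn, citation, body, embedding):
    """Store a source passage together with its vector; return its id."""
    cur = conn.execute(
        "INSERT INTO source_passage (citation, body) VALUES (?, ?)",
        (citation, body),
    )
    passage_id = cur.lastrowid
    conn.execute(
        "INSERT INTO passage_vector (passage_id, embedding) VALUES (?, ?)",
        (passage_id, json.dumps(embedding)),
    )
    return passage_id


def add_suggestion(conn, passage_id, article_title, suggestion):
    """Store AI output next to the source passage it was derived from."""
    conn.execute(
        "INSERT INTO edit_suggestion (passage_id, article_title, suggestion) "
        "VALUES (?, ?, ?)",
        (passage_id, article_title, suggestion),
    )


def pending_suggestions(conn):
    """Join output back to its source so a human reviewer sees the citation."""
    return conn.execute(
        """
        SELECT s.article_title, s.suggestion, p.citation
        FROM edit_suggestion AS s
        JOIN source_passage AS p ON p.id = s.passage_id
        WHERE s.reviewed = 0
        ORDER BY s.id
        """
    ).fetchall()
```

The point of the design is the join in `pending_suggestions`: because sources, vectors and suggestions live together, every suggestion can be traced back to its citation with a single query.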

Thank you

Thank you to Susanna Ånäs for arranging the AI Sauna, to Kimmo Virtanen of Wikimedia Finland and Jonas Jönsson of Wikimedia Sweden for sharing their deep Wikipedia insights, and to the Society of Swedish Literature in Finland for providing the sources and expertise for our Gen AI work – let me mention Johan Pyy and Maria Vainio-Kurtakko by name.

Links from AI Sauna 6 May 2024

- Wiki AI Sauna program

- Robert’s and Kaj’s AI Sauna Speaker Notes

- Robert’s and Kaj’s video presentation on YouTube

Links from Projekt Fredrika

Projekt Fredrika improves the coverage of Swedish Finland on Wikipedia, primarily in Swedish but also in other languages. Read about us on Wikipedia (Projektsidan, Project page, Projektisivut, Projektseite, Page du projet, страница проекта), or follow us on Twitter, Facebook, Instagram, or YouTube.